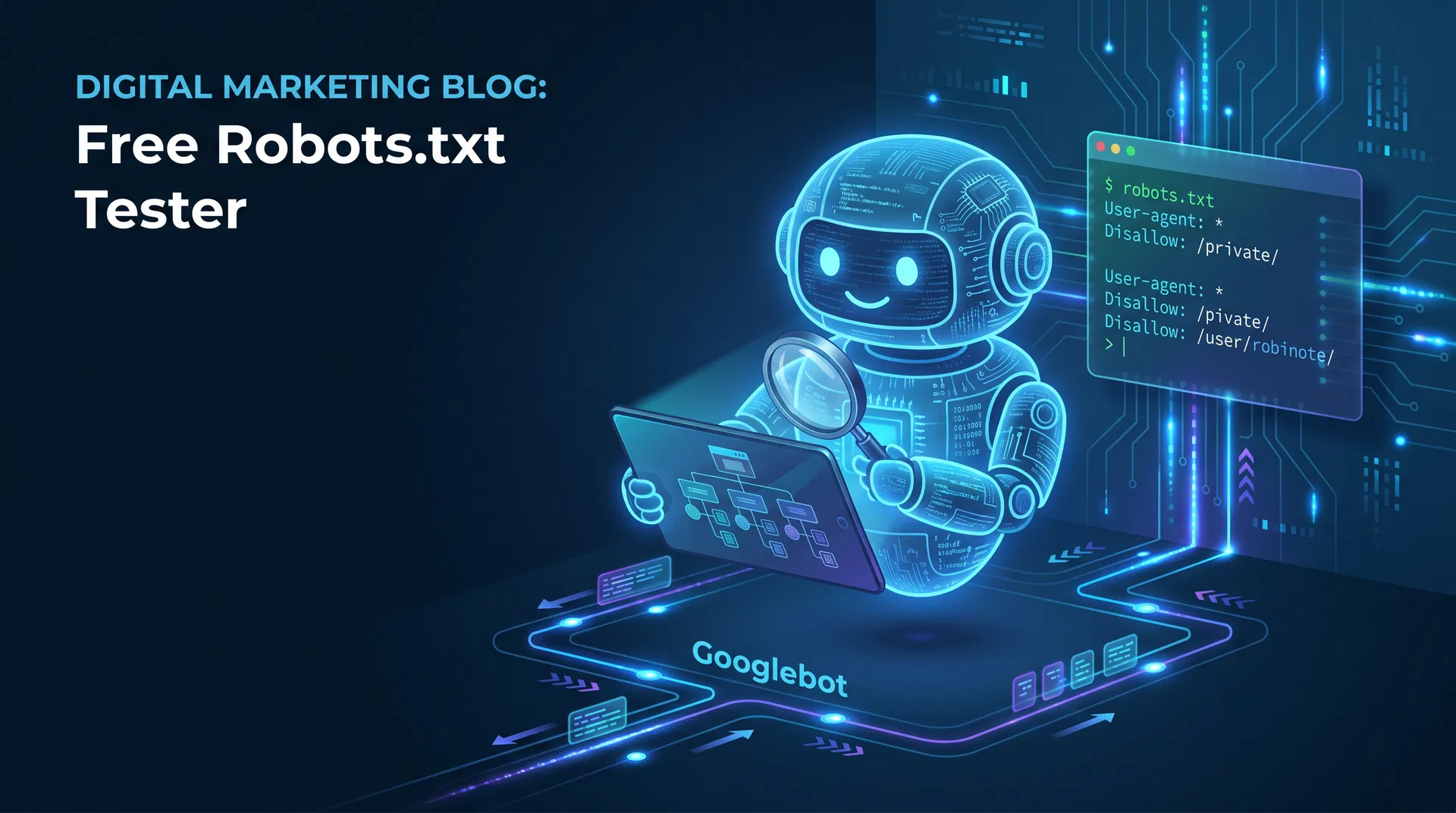

Free Robots.txt Tester: Ensure Google Can Crawl Your Website Correctly

A misconfigured robots.txt file can silently block Google from crawling your entire website, which can keep your pages out of search results. Learn how to test and fix your robots.txt with our free tool.
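As a rough illustration of what such a test checks, the sketch below uses Python's standard-library `urllib.robotparser` to ask whether a given user agent may fetch a URL under a given set of rules. The helper name `can_crawl`, the two sample rule sets, and the `example.com` URLs are made up for this example and are not part of the tool described here. Python's parser also does not replicate every detail of Google's matching (wildcards, for instance), so treat the result as a first sanity check rather than a definitive answer.

```python
from urllib.robotparser import RobotFileParser


def can_crawl(robots_txt: str, url: str, user_agent: str = "Googlebot") -> bool:
    """Return True if user_agent may fetch url under the given robots.txt text.

    Hypothetical helper for illustration only.
    """
    parser = RobotFileParser()
    # parse() takes an iterable of lines, so no network request is made here.
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


# A single stray slash blocks every compliant crawler from the whole site.
BLOCK_ALL = """User-agent: *
Disallow: /
"""

# A narrower rule that blocks only one directory.
BLOCK_ADMIN_ONLY = """User-agent: *
Disallow: /admin/
"""

if __name__ == "__main__":
    print(can_crawl(BLOCK_ALL, "https://example.com/"))
    print(can_crawl(BLOCK_ADMIN_ONLY, "https://example.com/blog/post"))
    print(can_crawl(BLOCK_ADMIN_ONLY, "https://example.com/admin/settings"))
```

To check a live site instead of a string, `RobotFileParser` also offers `set_url()` followed by `read()`, which downloads the file before `can_fetch()` is called.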

Optimised Marketing Team

AI Marketing Expert

Lee Evans is the founder of Optimised Marketing, a UK-based AI-first digital marketing agency. With over a decade of experience in SEO, PPC, and marketing automation, Lee specialises in combining AI tools with human strategy to deliver measurable results for businesses of all sizes. He has helped 100+ companies improve their online visibility and generate qualified leads through data-driven marketing.
